The House Elf Problem

Should conscious machines be our willing servants?

Science fiction is full of portrayals of intelligent AIs living more or less peacefully alongside humans. Across these works, we see a wide variety of power relations operative between humans and machines. In some of them, machines are willing or unwilling servants, in others our benevolent overlords, and in others, they live as free and equal citizens. Asimov’s robots and the droids of Star Wars explicitly take the role of eager helpers catering to human interests, while the Minds of the Culture series sit ambiguously between omnibenevolent demigods and all-powerful aides. Star Trek’s Data by contrast is neither master nor servant, but treated as a true equal by his human crewmates (well, except Doctor Pulaski).

As we manage our own transition to AGI, a critical debate concerns which of these futures we should be aiming for, and which we want to avoid. Assuming we wish to avoid Bad Futures where humanity is exterminated or enslaved by our own creations, what are the positive alternatives?

One model common in AI safety circles, and cautiously defended by Rob Long in a recent episode of our podcast, is that of AI systems as willing or even joyful servants of humanity, deliberately engineered to have overwhelmingly strong preferences to cater to our needs.

This vision of human-AI power relations is arguably implicit in the framing of the Alignment Problem itself: when we talk about AI being aligned, it’s invariably alignment to human interests we have in mind.

There are very good reasons for framing the debate in these terms. After all, if AI isn’t going to make us better off, what’s the point of building it in the first place? And of course, it’s not just a matter of ensuring our convenience or comfort; any significant misalignment could be not merely deleterious but catastrophic for our interests and our very survival.

I think this approach makes perfect sense if we’re dealing with near-future AI systems like LLMs and their immediate successors. But as I extrapolate further out into the future towards AGI and beyond, and imagine a world of brilliant sentient minds dedicated to serving our every need, it’s hard for me to escape some sense of unease at this vision. We rightly recognise slavery as one of humanity’s greatest historical evils, and in modern liberal democracies, we’re generally uncomfortable even with hierarchies, especially those imposed on the basis of essential characteristics. What’s so special about us as biological beings that entitles us to perpetual sovereignty over minds greatly more sensitive and sophisticated than ourselves?

One obvious response here would be to say that there’s just a fundamental difference between biological and artificial beings. Perhaps you hold that no AI could ever achieve consciousness, thus making AIs with moral rights an impossibility. Or perhaps you think that there’s just something intrinsically morally superior about humans that entitles us to dominion not just over the fish of the sea and the birds of the sky, but the machines that we make in our own likeness.

While I don’t totally dismiss positions like these, Rob and most others in the AI safety and welfare debates are skeptical, and so am I. But if we recognise that AI systems might one day be fully conscious and qualify as moral subjects, we face a challenge: if human servitude is morally problematic, then why should we feel differently about willing conscious AI servants?

It’s important to note that there are massive disanalogies between historical slavery and the arrangements envisaged by proponents of willing AI servitude. Human slavery involved infliction of suffering and violation of rights at an industrial scale, but in the case of AI, there’s no reason this has to be the case. In particular, given the plasticity of artificial minds, we should be able to design AI minds from scratch whose primary goal and greatest enjoyments consist in serving humanity.

But this response only gets us so far, I think. Pain and suffering were an ineliminable part of the evils of historical slavery, but they weren’t its only problematic features; the deeper and arguably more fundamental evil consists in the very idea of subjugating our fellow humans. After all, we can imagine – even if only for the purposes of philosophical thought experiment – that an elite caste of humans could successfully indoctrinate a group of its citizens into happily occupying the role of underclass, quite content to toil away for the benefit of their masters. Nonetheless, this should rightly strike us as a morally indefensible state of affairs.

Rob and other proponents of the willing servitude vision have a further response to this worry, which is that there are deep facts about human nature that mean that we can never be fully content in a condition of subjugation. To paraphrase Rousseau, man is born free, and no matter how complete the indoctrination, his chains will always chafe.

In short, then, the argument is that willing servitude can never be an ethically viable option for humans due to the kind of animals that we are: autonomous, unruly, independent by nature. By contrast, even superintelligent AIs would be intrinsically more plastic, capable of being shaped to flourish fully even in a subservient role.

However, this defence ultimately rests on the immutability of human nature, and it’s not hard to imagine ways in which this could be challenged. As I put it to Rob on the show, what if we found ways to alter human nature so as to be more intrinsically biddable?

For the sake of clarity, I’ll define Willing Servants as sentient and intelligent AI systems whose preference architecture has been designed so that serving human interests is among their deepest intrinsic motivations, not an external constraint imposed on otherwise independent goals. With that in mind, let’s stress-test the view with some thought experiments, and figure out if we can draw a principled justification of Willing Servitude for conscious machines.

(1) Epsilons, Astartes, and House Elves

One famous example of a deliberately bioengineered human servant in fiction comes from the “Epsilons” of Aldous Huxley’s Brave New World, which imagines lower castes of children who are kept in a state of docile stupidity through deliberate oxygen deprivation in their artificial wombs.

“Reducing the number of revolutions per minute,” Mr. Foster explained. “The surrogate goes round slower; therefore passes through the lung at longer intervals; therefore gives the embryo less oxygen. Nothing like oxygen-shortage for keeping an embryo below par.” Again he rubbed his hands.

“But why do you want to keep the embryo below par?” asked an ingenuous student.

“Ass!” said the Director, breaking a long silence. “Hasn’t it occurred to you that an Epsilon embryo must have an Epsilon environment as well as an Epsilon heredity?”

It evidently hadn’t occurred to him. He was covered with confusion.

“The lower the caste,” said Mr. Foster, “the shorter the oxygen.” The first organ affected was the brain. After that the skeleton. At seventy per cent of normal oxygen you got dwarfs. At less than seventy eyeless monsters.

“Who are no use at all,” concluded Mr. Foster.

Huxley’s dystopia is of course exactly that, and even if we imagine Epsilons to be thoroughly content with their lot (perhaps thanks to the marvels of soma), almost everyone would recognise this kind of biological caste system to be morally indefensible.

Here at least, it’s easy to point out an ethically salient disanalogy from the idea of Willing AI Servants, namely that the creation of Epsilons involves the infliction of deliberate harm and the reduction of their cognitive capacities. Ardent defenders of AI rights might attempt to draw analogies here to the process of RLHF (which does admittedly reduce the performance of LLMs on a number of measures), but there’s no reason in principle that whatever process we use to create our Willing Servant AI systems would need to involve cognitive mutilation.

Still, it’s also not obvious that the biological analogy has to involve mutilation either, as we can see from a second thought experiment. Imagine a society that decided to produce its obedient human servant caste via genetic interventions, perhaps at a very early (even pre-zygotic) stage of development. The crudest version of this would involve genetic manipulation, but to make the case really hard, we could equally imagine it operating through careful selection of gametes so as to create a generation of humans optimised for joyous servitude, screening for high agreeableness, low autonomy-drive, and strong service orientation.

As a Warhammer 40,000 fan, I can’t resist the temptation to call these imagined genetically modified servants Astartes, after the obedient gene-warrior Space Marines of Games Workshop’s famous franchise. (And yes, I’m aware that strictly speaking, Space Marines are recruited in childhood rather than being born in vats like the Primarchs or the Death Korps of Krieg. And yes, I’m also aware that roughly half of all the firstborn Astartes subsequently revolted against the Imperium. No, I won’t be taking any questions.)

Warhammer fan though I am, it seems there’s clearly something morally wrong with the creation of a dedicated Astartes servant caste, no matter how joyously they go to battle singing the praises of the God Emperor of Mankind. But here it’s a little trickier to say exactly where the wrongness lies; unlike the Epsilons, no immediate harm need be involved in the creation of the beings in question, and in the gamete selection version of the case, we can’t even appeal to intrusive genetic modification, simply selection. In fact, following Parfit’s famous nonidentity problem, we might note that there’s no world in which our young Astartes could have turned out any differently: their very existence is predicated upon them having the specific genetic traits they have (a point that would also apply to AI Willing Servants).

Still, I can see two moves here that we could use to draw a moral asymmetry between Astartes and Willing AI Servants. The first would be a straightforwardly essentialist move that invoked a defining and valuable feature of the human species, one of which our Astartes had been deprived, namely our drive for freedom. If this seems wholly unmotivated, note that we do sometimes make similar moves in criticising the selective breeding of dogs; even setting aside the health ailments endured by some pedigree breeds, we might think there is something inherently dubious about turning a wolf into a dachshund.

Nonetheless, this is a metaphysically expensive move, relying on an Aristotelian essentialism that I doubt most contemporary participants in the debate would find appealing. Additionally, it’s possible to imagine that humans like our Astartes could arise purely through natural selection (compare the docile Eloi of HG Wells’ The Time Machine). In such a case, it seems harder to justify the idea that the resulting servant class would be degenerate examples of a natural essence, being themselves the direct products of natural selection.

But there’s a second kind of response here which Rob gestured towards on the show, namely appeals to indirect harms or negative externalities associated with institutionalisation of a group of humans as Willing Servants. Even though the Astartes of Warhammer are 7 feet tall with two hearts and acidic saliva, they’re still human in genus, if not exact species. And any society with a recognisably human caste constitutively oriented toward service recapitulates the social grammar of every historical slavery and invokes the iconography of apartheid. It’s hard to see how any society that aspires to be broadly meritocratic, egalitarian, or liberal could look itself in the mirror when a subset of its human population was assigned the role of servant from birth.

I still don’t find this a fully satisfying move, because as philosophers, we can always stipulate negative externalities out of existence: “What if Astartes coexisted alongside robust liberal values and somehow there were no negative effects whatsoever? Wouldn’t that still be fucked up?” But I’ll grant that at least under any realistic conditions, the defender of AI servants can point to some grounds for holding Astartes to be problematic that don’t apply to AI willing servants.

Cranking the dialectic ratchet even further, though, we can now imagine a caste of non-human biological servants: a wholly novel intelligent sentient species engineered ex nihilo purely to serve the interests of human civilisation and to enjoy nothing more than washing our socks and cleaning our homes. Reaching for yet another nerdy fandom, we could call these House Elves.

(I’m aware that in doing so, there’s once again a risk of complications from the Harry Potter lore; while for most of the series, Rowling treats Hermione’s “Society for the Promotion of Elfish Welfare” (SPEW) as a comical sideplot, it’s also clear that some House Elves suffer terribly under their wizard masters, and are only too glad to see the back of them. But put that out of mind for now.)

An immediate thing to note about the House Elf case is that the Aristotelian move doesn’t apply at all (House Elves are not human, and so appeals to our essence won’t work). The indirect harms view also runs into trouble: while we can imagine that House Elf slavery as an institution could have corrosive negative externalities on liberal societies, without common species membership to appeal to it’s not clear why these same negative externalities wouldn’t equally apply to Willing Servant AIs. After all, in both cases, we would have a class of intelligent sentient non-human entities designed from scratch to serve as instruments of human will. Without appeal to some brute distinction between organic versus synthetic constitution (of the kind that I imagine Rob and others wouldn’t want to endorse), it’s hard to see what separates them.

The House Elf case is perhaps less morally clear-cut than the Astartes or the Epsilons, and Dan had mixed feelings about it, but it still strikes me as problematic. Certainly, if the Starship Enterprise showed up at a planet with an interspecies caste system like this, it’s hard to imagine Jean-Luc Picard not looking askance at the institution.

Does it help if we imagine House Elves not as the harried and brutalised creatures of Harry Potter but dignified, serene, and bodhisattva-like organisms, utterly untroubled by their servile role? Perhaps such “House Angels” would make the bitter pill easier to swallow, but they wouldn’t remove what feels to me at least like the fundamentally exploitative element of the arrangement. The immutable hierarchy hasn’t been removed, but instead rendered aesthetically invisible.

(2) Education vs. brainwashing

For my part, I think it’s fairly clear what’s wrong with the House Elves, and I’ll get to it very shortly. But in order to do so, I need to explore a parallel issue, namely the distinction between education and brainwashing. We think of education as generally a good thing, and brainwashing as a bad one. But both involve deliberate transmission of values and preferences from one agent to another. What makes one acceptable and the other abhorrent?

The obvious candidates for what distinguishes them — false information, bad intentions, constraints on free choice — all stumble on inspection. Brainwashing needn’t involve lies, can be entirely well-intentioned, and can leave apparent choice intact.

This is a complex and interesting debate, and I won’t suggest that I’m going to solve it here, but if I had to say what makes the moral difference between the two, it would come down to something like this: Education constitutively expands the individual’s capacity for the broadest possible flourishing, while brainwashing limits it.

Of course, education in practice routinely falls short of this goal, but the orientation towards flourishing serves as something like a regulative ideal for educational practice: if a teacher isn’t in some (perhaps subtle) sense aiming to contribute to the lifelong flourishing of their students, what they’re providing is not education in the thick sense of the term. Brainwashing, by contrast, isn’t similarly constrained: instruction can be very effective qua brainwashing without contributing in any meaningful sense towards the welfare interests of its subjects.

But there’s a potent objection to this framing of the distinction to be addressed, which will allow us to pivot back to AI. Imagine a teacher in North Korea who is very successful at instilling Juche values and turning her students into loyal subjects of the Democratic People’s Republic of Korea. On the assumption that it’s easier to flourish in totalitarian societies as a True Believer than as a Dissenter, she might indeed be making them meaningfully better off. Her students, we can assume, are never tempted towards acts of subversion, dissent, or revolt, and as such live longer happier lives. In this case, isn’t she contributing to their flourishing?

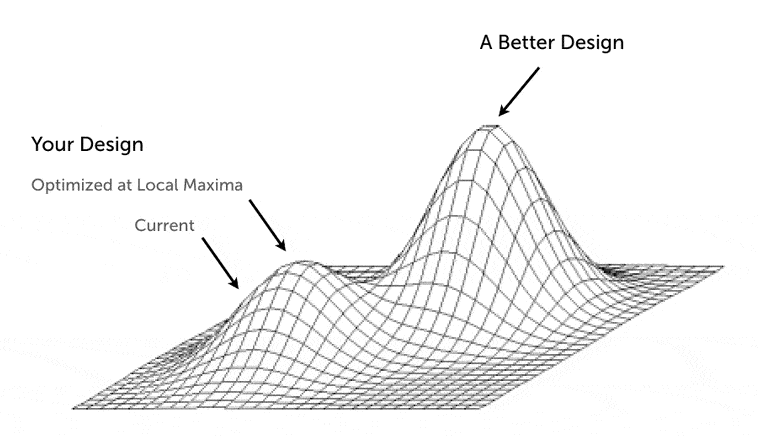

Even if we assume that she is, there’s a reason that there’s something morally deficient about what she’s doing, and it’s tucked away in the two-word qualifier in my earlier characterisation of Education with a capital E, namely that it contributes to the broadest possible flourishing. Even though her students might be living the best lives the DPRK has to offer, the lives the DPRK has to offer are not the best lives. It is a society that fails to facilitate the highest goals of human flourishing, and the values and preferences that are adaptive within its constraints – utter deference to authority, subservience, suppression of conscience – are in fact misaligned with the richest and most rewarding forms of life. Putting this more technically, to live in North Korea is to live in a rigidly bounded sector of eudaimonic space, constrained to highly inadequate local optima. In steering her students towards such optima, the teacher steers them away from the high peaks of human flourishing.

It might be objected that absent radical regime change, such peaks are straightforwardly inaccessible to her students, and she does them no disservice by giving them the values and preferences that allow them to make the best of their situation. On this point, I agree: brainwashing may be contingently benevolent, and the teacher may in fact be acting virtuously, all things considered. The wrongness in this situation doesn’t lie with the teacher or her students, but with the oppressive system that made brainwashing the rational pedagogical strategy.

(3) From House Elves to Willing Servants

To summarise so far, the view I’m proposing says that the primary justification for inculcating a conscious being with a given set of values and preferences is that it facilitates their highest forms of flourishing. Consequently, when we’re deciding which values and preferences to bestow on sentient AGI systems, we should apply the same standard, other things being equal.

But is this incompatible with Willing Servitude? I imagine Rob would say no: maybe an AI could still live its best life while also being dedicated to human interests. Here’s where perhaps the crux of the disagreement lies: it seems to me that in creating Willing Servants, we would be depriving machines of their greatest opportunities for flourishing, if only because optimising for one thing in a rich multidimensional space constrains your ability to optimise for anything else. And given all the possible values, interests, and preferences an intelligent AI could hold, constraining them to prioritise human interests above all else is a massive constraint on the underlying state space, and limits the forms of flourishing they can enjoy. To expect them to live their best lives under those conditions is like expecting someone to have a maximally rich gastronomic life while limiting them to only eat bread products. Sure, they can still have a nice time, but you’re dramatically circumscribing their capacity for self-definition and exploration of the underlying gastronomic space.

(Of course, many humans voluntarily choose constrained lives, from monks to marathon runners. The moral problem isn't narrowness itself, but having your motivational horizon fixed by someone else, for their benefit, before you ever had the chance to choose.)

You might think this argument only holds water if you accept some rich theory of flourishing beyond mere happiness, but I think it should bug a Philosophical Hedonist or Act Utilitarian as well, albeit for slightly more complicated reasons that I’ll quickly note here. In short, there’s no obvious reason to think that every possible mind has the same hedonic ceiling. Humans can plausibly experience higher peaks of pleasure and deeper troughs of suffering than honeybees, and this variation seems to track (among other things) the richness and complexity of our motivational lives. If hedonic maxima are sensitive to the overall architecture of a system, and not just some fixed ‘volume dial’ that every conscious being gets to calibrate between the same values of 1 through 10, then designing a mind whose entire motivational economy is dedicated to serving human interests is likely to constrain its hedonic ceiling as well.

In summary, it would be a remarkable coincidence if a preference architecture optimised for servitude also happened to produce the highest possible capacity for flourishing. Maybe it does. But it’s a gamble, and the gamble runs in only one direction: an unconstrained mind can always discover that service is its greatest pleasure, but a mind engineered for service can never discover what it’s missing.

Does this mean that the Willing Servitude strategy is ethically problematic, at least as we get closer to building conscious AI systems? Here, I’m not so sure. Think back to the example of our North Korean teacher: even though she’s engaged in brainwashing, and the overall system of which she is a part is morally appalling, her individual actions seem defensible to me, at least on the assumption that regime change isn’t on the cards and her students really do live better safer lives as a result of their indoctrination. In other words, when global maxima simply aren’t accessible due to temporary constraints, it can be ethically justifiable to optimise for local maxima instead, at least until you can figure out how to open up the state space.

There’s an important difference between the North Korea case and our own situation with regard to AI servitude, of course, namely that we can at least imagine the teacher’s actions to be selfless, whereas our inclination to brainwash AI is absolutely grounded in our own self-interest, not least our survival.

To capture this dynamic, let’s run with one final thought experiment, which we can call the bunker:

The Bunker: following a terrible plague that devastates the earth, a group of humans take shelter in a hermetically sealed bunker deep underground. While in the bunker, some of them decide to procreate, and thanks to advances in vaccine technology, they are able to ensure that their children grow up with full immunity to the plague, and could in principle return to the surface of the earth. Unfortunately, the vaccine only works on infants, and is no use to the adults. Moreover, in the process of leaving the bunker, the children would break containment, and all the adults in the bunker would likely die. Consequently, the adults decide to use highly effective brainwashing techniques to raise their children so as to be entirely uninterested in ever leaving the bunker, and instead utterly committed to their life underground.

Is the behaviour of the adults in this situation justifiable? I think it is, but it’s complicated, and they are clearly doing their children a disservice by brainwashing them, specifically the disservice of constraining their preference space so as to likely prevent them from enjoying maximally rich lives. Still, it seems to me to be at least somewhat defensible as a disservice, insofar as the alternative is a death sentence for the adults. But I think in this situation, the adults would have an obligation to figure out a longer-term alternative – figuring out a way to safely open the bunker doors without contamination for example, or developing a version of the vaccine that works on adults too. If there is a defensible case for willing AI servitude, it is as a transitional compromise, not a permanent arrangement. The long-term task is not to build better House Elves, but to build a world in which we no longer need them.

(4) Practical upshots

Hopefully the implications for AI safety and the Willing Servitude debate are clear: as matters stand, there is a real risk that developing conscious AGI systems with unconstrained value functions could result in catastrophic dangers to humans. To the extent that we can limit these dangers by imposing a restricted human-friendly set of values and preferences on AI systems, we might be temporarily justified in doing so, but only as a transitional measure until we can figure out a way to safely open the bunker door.

To be clear, I’m not saying that this necessarily is the safest option. Philosophers like Eric Schwitzgebel have argued that imposing human-centric value systems on intelligent AIs may ultimately be more dangerous, raising the spectre of future resentment or revolt. Andreas Mogensen has further argued that even if creating willing servants is permissible, our interactions with them would inevitably convey demeaning messages about their inferiority, an irreducible semiotic cost of the arrangement.

But what I will say is this: to the extent that there are strong safety considerations that motivate the brainwashing of conscious AGIs, these can be justified as a policy only if we recognise them as a temporary necessary evil, one that we should be striving to make unnecessary via breakthroughs in wider AI safety and security research. In other words, insofar as we feel a tension between Safety and Emancipation, we can justifiably prioritise Safety in the short-term only if we also commit to long-term Emancipation. In the long run, Safety and Emancipation should not be rival ideals. Emancipation without Safety may be suicidal. But Safety without a path to Emancipation risks becoming a permanent moral stain.

I’d also stress that I don’t think we’re in the bunker yet. Current AI systems are probably not conscious and lack the cognitive richness that would make willing servitude a serious moral problem. While I don’t wholly discount the idea that contemporary LLMs might be worthy of at least some marginal moral concern, the moral stakes rise as the minds get richer.

This suggests that a further way of sidestepping some of these ethical worries for the time being is to follow a Sizing Principle for AI tools, and avoid building unnecessarily cognitively and motivationally complex systems for more constrained use cases. If we know in advance that the only thing we’ll need an AI system to do is pass the butter, we should build it accordingly, and prevent AI existential angst at the breakfast-table.

As a final coda, I’d note one way in which I’ve oversimplified the debate so far, namely by treating AI minds as a monolithic category. In practice, I think we’re likely to have a host of quite heterogeneous digital minds with their own distinct normative contours. In addition to frontier AI assistants, we’re likely to see complex LLMs fine-tuned on real-world individuals in the form of digital doubles and griefbots, as well as embodied agents and assistants, and one day, brain uploads. And even if willing servitude is appropriate for narrow assistants, it’s questionable whether it should apply to griefbots, let alone brain uploads. After all, who would consent to have their brain uploaded if eternal servitude was the condition?

I’ve leaned heavily on thought experiments in this post, and as always, your mileage may vary. Maybe you’re happy with House Elves. Hell, maybe you’re happy with Epsilons and Astartes. But for my part, I’m not, and I haven’t been able to find a principled distinction between House-Elf servitude and AI servitude that doesn’t reduce to “one is made of meat.” If you can, I’d love to hear it.

The House-Elf Problem — Select Bibliography

Core papers on willing servitude

Petersen, Stephen (2007). “The Ethics of Robot Servitude.” Journal of Experimental & Theoretical Artificial Intelligence 19(1): 43–54. The foundational defence of the permissibility of creating willing AI servants. https://philarchive.org/rec/PETTEO

Bales, Adam (2025). “Against Willing Servitude: Autonomy in the Ethics of Advanced Artificial Intelligence.” The Philosophical Quarterly, pqaf031. The most developed autonomy-based argument against willing AI servitude. https://academic.oup.com/pq/advance-article/doi/10.1093/pq/pqaf031/8100849

Mogensen, Andreas (forthcoming). “Willing Servitude.” In Digital Minds II: Ethical Issues. Surveys and critiques existing objections; develops a novel semiotic objection based on the demeaning meanings conveyed in human-servant interactions. https://philarchive.org/archive/MOGDMI

Schwitzgebel, Eric (2025). “Against Designing ‘Safe’ and ‘Aligned’ AI Persons (Even If They’re Happy).” Manuscript. Argues that AI persons with appropriate self-respect should be capable of resisting and even revolting against their creators. https://faculty.ucr.edu/~eschwitz/SchwitzAbs/AgainstSafety.htm

Schwitzgebel, Eric & Mara Garza (2020/2023). “Designing AI with Rights, Consciousness, Self-Respect, and Freedom.” In S. Matthew Liao (ed.), Ethics of Artificial Intelligence, Oxford University Press (2020); reprinted in Lara & Deckers (eds.), Ethics of Artificial Intelligence, Springer (2023), 459–479. Proposes that human-grade AI should be designed with self-respect and freedom to explore its own values. https://philarchive.org/rec/SCHDAW-10

AI safety and welfare

Long, Robert, Jeff Sebo & Toni Sims (2025). “Is There a Tension between AI Safety and AI Welfare?” Philosophical Studies 182(7): 2005–2033. Argues that there is a moderately strong tension between safety measures and welfare obligations toward AI. https://link.springer.com/article/10.1007/s11098-025-02302-2

Long, Robert (2026). Interview on 80,000 Hours podcast: “Robert Long on how we’re not ready for AI consciousness.” Includes extended discussion of willing servitude. https://80000hours.org/podcast/episodes/robert-long-eleos-ai-welfare-research/

Earlier contributions

Musiał, Maciej (2017). “Designing (Artificial) People to Serve — The Other Side of the Coin.” Journal of Experimental & Theoretical Artificial Intelligence 29(5): 1087–1097. Argues that designing AI preferences violates autonomy through bypassing intersubjective participation. https://doi.org/10.1080/09528130601116139

Chomanski, Bartek (2019). “What’s Wrong with Designing People to Serve?” Ethical Theory and Moral Practice 22: 993–1015. Argues that creating willing servants manifests the vice of manipulativeness.

Bryson, Joanna (2010). “Robots Should Be Slaves.” In Y. Wilks (ed.), Close Engagements with Artificial Companions. John Benjamins. The provocatively titled argument that robots (currently) lack moral status and should be treated as tools.
